Source: Image by Jema stock on Freepik

Introduction

In today’s data-driven world, businesses generate and store an enormous amount of data every day. Data is the backbone of every business and is critical to making informed decisions.

However, as data grows, database management systems come under significant load, which can cause them to slow down or even crash.

Load balancing is an effective technique that can help distribute the load across multiple servers, ensuring that the database can handle the increased demand without slowing down or crashing.

In this blog post, we will explore the concept of load balancing in database management systems, its importance, and its various aspects.

What is Load Balancing?

Source: Image by Grommik on Freepik

Load balancing is a technique that distributes the workload across multiple servers to prevent any one server from being overloaded. In a database management system, load balancing can be achieved by distributing the queries across multiple servers.

This approach ensures that each server handles only a fraction of the total workload, resulting in improved performance, scalability, and availability.

Load balancing is essential for ensuring that a database management system can handle high volumes of data with ease.

By distributing the workload across multiple servers, load balancing ensures that the system can handle the increased demand without slowing down or crashing, providing uninterrupted access to the data.

Types of Load Balancing

There are two types of load balancing techniques: hardware-based and software-based.

Hardware-based load balancers are custom-designed physical devices installed between the security system and the server infrastructure. They are known for their high throughput, low latency, and advanced features such as SSL (Secure Sockets Layer) offloading and DDoS (distributed denial of service) protection.

Software-based load balancers are virtual appliances that run on standard hardware, installed on servers or in virtual machines. They are known for their flexibility and ease of deployment on existing infrastructure, making them a cost-effective choice that can be easily scaled up or down depending on demand.

The choice between hardware-based and software-based load balancers depends on the specific needs of the organization and its IT infrastructure.

Hardware-based load balancers are ideal for high-performance and high-budget environments, while software-based load balancers are a better choice for smaller or more dynamic environments.

Load Balancing Algorithms

Source: Image by Starline on Freepik

Load balancing algorithms determine how the workload is distributed across servers.

There are several load balancing algorithms available, including round robin, least connections, least time, hash, IP hash, and random with two choices:

1. Round Robin: Requests are distributed across the group of servers sequentially.

2. Least Connections: A new request is sent to the server with the fewest current connections to clients. The relative computing capacity of each server is factored into determining which one has the least connections.

3. Least Time: Sends requests to the server selected by a formula that combines the fastest response time and fewest active connections. This algorithm is exclusive to NGINX Plus.

4. Hash: Distributes requests based on a key you define, such as the client IP address or the request URL. NGINX Plus can optionally apply a consistent hash to minimize redistribution of loads if the set of upstream servers changes.

5. IP Hash: The IP address of the client is used to determine which server receives the request.

6. Random with Two Choices: Picks two servers at random and sends the request to the one that is selected by then applying the Least Connections algorithm (or for NGINX Plus, the Least Time algorithm if configured to do so).
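To make the selection rules above more concrete, here is a minimal sketch of four of them in Python. It is an illustration under stated assumptions, not how NGINX or any other product implements them: the `Backend` class, the server names, and the helper functions are all hypothetical, and the least connections version ignores the relative server capacity mentioned above.

```python
import hashlib
import itertools
import random


class Backend:
    """A hypothetical database server tracked by the balancer."""

    def __init__(self, name):
        self.name = name
        self.active_connections = 0


def round_robin(backends):
    """Return a picker that cycles through the servers in order."""
    cycle = itertools.cycle(backends)
    return lambda: next(cycle)


def least_connections(backends):
    """Pick the server with the fewest active connections (unweighted)."""
    return min(backends, key=lambda b: b.active_connections)


def ip_hash(backends, client_ip):
    """Map a client IP to a server; the same IP always maps to the same server."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    return backends[int.from_bytes(digest[:8], "big") % len(backends)]


def random_two_choices(backends, rng=random):
    """Sample two distinct servers at random, then keep the less loaded one."""
    first, second = rng.sample(backends, 2)
    return least_connections([first, second])


servers = [Backend("db1"), Backend("db2"), Backend("db3")]
pick = round_robin(servers)
print([pick().name for _ in range(4)])  # db1, db2, db3, then db1 again

servers[0].active_connections = 5
servers[1].active_connections = 2
servers[2].active_connections = 7
print(least_connections(servers).name)       # db2 has the fewest connections
print(ip_hash(servers, "203.0.113.7").name)  # stable for this client IP
```

Note that this simple modulo-based hash remaps most clients when a server is added or removed, which is the problem a consistent hash is meant to reduce.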

Benefits of Load Balancing

Load balancing provides several benefits to organizations, including:

- Improved System Performance

- Scalability

- Availability

By distributing the workload across multiple servers, load balancing ensures that the system can handle the increased demand without slowing down or crashing. This provides uninterrupted access to the data, improving the overall user experience.

Load balancing also makes it possible for organizations to scale their systems as their data grows.

By adding more servers to the system, organizations can distribute the workload across multiple servers, ensuring that the system can handle the increased demand without slowing down or crashing.

Cloning And Its Importance in Load Balancing Configuration for High Availability

Source: Image by GarryKillian on Freepik

Cloning is the process of creating an exact copy of a server or database instance.

In the context of load balancing, cloning is important for achieving high availability. By cloning servers, an organization can ensure that there are multiple instances of the database available to handle the workload.

If one server fails, the cloned server can take over, ensuring that the system remains available and accessible to users.
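The takeover described above can be sketched in a few lines of Python. This is only an illustration: the `Replica` class, the instance names, and the `healthy` flag (standing in for a real health check) are assumptions, not part of any specific product.

```python
class Replica:
    """A hypothetical cloned database instance."""

    def __init__(self, name, healthy=True):
        self.name = name
        self.healthy = healthy  # stands in for the result of a health check


def choose_instance(replicas):
    """Return the first healthy instance, in priority order."""
    for replica in replicas:
        if replica.healthy:
            return replica
    raise RuntimeError("no healthy database instance available")


primary = Replica("db-primary")
clone = Replica("db-clone")
pool = [primary, clone]

print(choose_instance(pool).name)  # db-primary while it is healthy
primary.healthy = False            # simulate a failed health check
print(choose_instance(pool).name)  # db-clone takes over
```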

Cloning can be achieved through various methods, including physical cloning and virtual cloning.

Physical Cloning Vs Virtual Cloning

Physical cloning involves creating a copy of the server using physical hardware, while virtual cloning involves creating a copy of the server using virtualization technology.

Cloning is an essential part of load balancing configuration for high availability. By ensuring that there are multiple instances of the database available to handle the workload, organizations can ensure that their critical data is always available and accessible to their users.

This improves the overall user experience and ensures that the organization can meet its data needs, no matter how large the workload.

Software Load Balancers vs Hardware Load Balancers: Which is Better for Your DB System?

Source: Image by rawpixel.com on freepik

When it comes to load balancing in database management systems, there are two types of load balancing techniques: software-based and hardware-based.

Both techniques have their pros and cons, and choosing the right one for your organization depends on your specific needs and workload requirements.

Software-Based Load Balancers

Software-based load balancers involve using software to distribute the workload across servers.

Using software is more flexible and cost-effective than hardware-based load balancing but may not offer the same level of performance.

Software-based load balancers are ideal for smaller or more dynamic organizations that do not have the budget of larger organizations.

They are also suitable for organizations that need to balance the workload across cloud-based servers.

Hardware-Based Load Balancers

Hardware-based load balancers involve using a dedicated hardware device to distribute the workload across servers.

Hardware is more expensive than software-based load balancing but offers better performance and scalability.

Hardware-based load balancers are ideal for organizations that handle big data and require high performance and scalability.

They are also suitable for organizations that need to balance the workload across on-premises servers.

Which is Better?

The choice between software-based and hardware-based load balancing depends on your organization’s specific needs and workload requirements.

If you are a smaller organization with a limited budget and workload, a software-based load balancer may be the best option for you.

If you are a larger organization with a high workload and need for performance and scalability, a hardware-based load balancer may be the best option.

It is important to consider the total cost of ownership, including maintenance and support, when choosing between software-based and hardware-based load balancing.

Additionally, it is important to consider your organization’s future growth and scalability needs when making the decision.

A Closer Look at Clustering in DB Systems: Standard Vs Enterprise Clustering

Clustering is a technique used in database management systems to improve availability and performance by grouping multiple servers together.

Standard clustering involves grouping servers together for failover purposes, while enterprise clustering involves grouping servers together for performance and scalability.

Advantages And Disadvantages of Standard and Enterprise Clustering

The advantages of standard clustering include improved availability and failover capabilities, while the advantages of enterprise clustering include improved performance and scalability.

The disadvantages of standard clustering include limited scalability and performance, while the disadvantages of enterprise clustering include increased complexity and cost.

Best Practices for Implementing and Managing Load Balancers

Source: Image by rawpixel.com on Freepik

Implementing and managing load balancers in database management systems requires careful planning and execution.

Here are some best practices to ensure that load balancers are implemented and managed effectively:

- Define Your Goals

Before implementing load balancers in your database management system, define your goals.

Determine what you want to achieve with the load balancer, such as improved system performance, scalability, and availability. This will help you choose the right load balancing technique and algorithm for your needs.

- Choose the Right Load Balancing Technique

Choose the right load balancing technique for your needs. Hardware-based load balancing is ideal for organizations that handle substantial amounts of data and require exceptional performance and scalability.

In contrast, software-based load balancing is suitable for smaller organizations that do not require the same level of performance and scalability as larger organizations.

- Choose the Right Load Balancing Algorithm

Choosing the right load balancing algorithm is crucial for optimal system performance. Consider your specific needs and workload requirements when selecting a load balancing algorithm.

For example, if requests from the same client IP address need to reach the same server every time, such as to preserve session state, then IP hash may be the best algorithm to use.

- Monitor Your System

Monitor your system to ensure that load balancers are working effectively.

Regularly check system performance metrics, such as response time and server utilization, to identify any issues that may be impacting system performance.

- Test Your Load Balancers

Test your load balancers to ensure that they are working effectively.

Use load testing tools to simulate high loads and identify any performance issues that may need to be addressed.

- Implement Redundancy

Implement redundancy to ensure that load balancers do not become a single point of failure.

Use multiple load balancers and configure them in a failover configuration to ensure the system can continue operating in case of a failure.

By following the above best practices, organizations can ensure that their load balancers are implemented and managed effectively, improving system performance, scalability, and availability.

Troubleshooting Common Issues with Load Balancers and Clustering in DB Systems

Source: Image by Storyset on Freepik

Load balancers and clustering are essential components of database management systems, but they are not without their challenges.

Here are some common issues that organizations may encounter when implementing load balancers and clustering, along with some troubleshooting tips:

- Network Latency

Network latency can be a significant issue in load balancing and clustering.

Latency occurs when there is a delay in data transmission between servers, which can lead to slow performance and decreased system availability.

To troubleshoot network latency issues, organizations should:

- Optimize network settings to reduce latency

- Use caching and compression to reduce data transmission times

- Use a high-speed, low-latency network connection

- Database Inconsistencies

Load balancing and clustering can sometimes cause database inconsistencies, where data on one server is not synchronized with data on another server.

This can lead to incorrect or inconsistent results, which can impact system performance and user experience.

To troubleshoot database inconsistencies, organizations should:

- Use a database replication solution to keep data coordinated across servers

- Implement a failover mechanism to ensure that the system can continue to operate in the event of a failure

- Monitor the system for inconsistencies and address them as soon as possible

- System Overload

Load balancing and clustering can also cause system overload, where the system is unable to handle the workload and crashes.

This can occur when the workload is too high or when the load balancer or clustering software is not configured correctly.

To troubleshoot system overload issues, organizations should:

- Monitor system performance metrics, such as response time and server utilization, to identify any issues that may be impacting system performance

- Use load testing tools to simulate high loads and identify any performance issues that may need to be addressed

- Configure load balancing and clustering software correctly.

Best Practices for Troubleshooting Load Balancers and Clustering in DB Systems

- Monitor system performance metrics, such as response time and server utilization, to identify any issues that may be impacting system performance

- Use load testing tools to simulate high loads and identify any performance issues that may need to be addressed

- Use a database replication solution to keep data coordinated across servers and prevent database inconsistencies

- Implement a failover mechanism to ensure that the system can continue to operate in the event of a failure

- Optimize network settings to reduce latency and improve system performance

- Configure load balancing and clustering software correctly to prevent system overload and other performance issues

Why Work With Us

We are a software-based load balancer provider, and we are passionate about helping businesses improve their website and application performance.

Load balancing is a critical component of any modern IT infrastructure, as it ensures that web traffic is distributed evenly across multiple servers. This not only prevents downtime and ensures high availability, but it also improves website speed and user experience.

At Finsense Africa, we pride ourselves on providing reliable, efficient, and cost-effective load balancing solutions to businesses of all sizes.

Our team of experts is dedicated to helping you improve your system performance, so you can focus on growing your business. Contact us today to learn more about our services and how we can help you.


Summary

The article talks about load balancing in database management systems. The system becomes slow or crashes when subjected to a significant amount of load, which is prevented by load balancing.

Load balancing distributes the workload across multiple servers, resulting in improved performance, scalability, and availability. There are two types of load balancing techniques – hardware-based and software-based.

Load balancing algorithms such as round robin, least connections, and IP hash are available. Load balancing provides several benefits, including improved system performance, scalability, and availability.

Cloning is the process of creating an exact copy of a server or database instance and is essential for high availability. Physical cloning and virtual cloning are two methods of cloning.

Choosing the right type of load balancer for an organization depends on its specific needs and workload requirements.


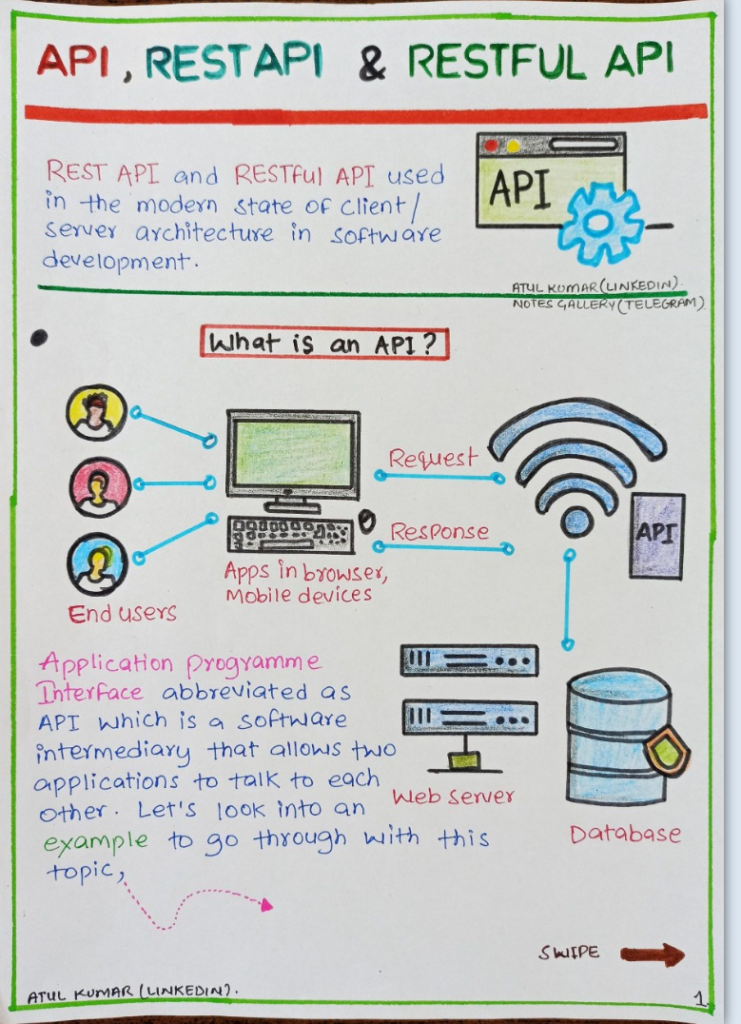

Image by Freepik

API stands for Application Programming Interface. This is a set of protocols, routines, and tools that enable software applications to communicate with each other.

What are APIs?

In simpler terms, an API is a messenger that takes requests and returns responses between different software systems.

APIs provide developers with a way to access the functionality of one software system from another, without having to know the details of how that system works internally.

APIs are typically built using web technologies, such as HTTP and XML, and are commonly used in web development and software engineering.

How do APIs Work?

APIs allow developers to build software that can interact with other software and services, making it possible to create more complex and integrated systems.

APIs can also be used to expose functionality to third-party developers, allowing them to build applications that leverage the features of a particular service or platform.

APIs can work in a variety of ways, but the most common method is a request-response cycle. A client sends a request to an API endpoint, the API server processes it, and the server returns a response to the client. The response can be in a variety of formats, including JSON, XML, or HTML.
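As a rough illustration of that cycle, the sketch below starts a tiny API server on a free local port, sends it one request, and reads the JSON response. It uses only the Python standard library; the `/status` endpoint and the `StatusHandler` and `call_status_endpoint` names are made up for this example.

```python
import json
import threading
from http.client import HTTPConnection
from http.server import BaseHTTPRequestHandler, HTTPServer


class StatusHandler(BaseHTTPRequestHandler):
    """Hypothetical API server exposing a single /status endpoint."""

    def do_GET(self):
        if self.path == "/status":
            code, payload = 200, {"status": "ok"}
        else:
            code, payload = 404, {"error": "not found"}
        body = json.dumps(payload).encode("utf-8")
        self.send_response(code)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the example output quiet


def call_status_endpoint():
    """Start the server on a free local port, send one request, return the reply."""
    server = HTTPServer(("127.0.0.1", 0), StatusHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        # The client side of the cycle: request out, response back.
        conn = HTTPConnection("127.0.0.1", server.server_port, timeout=5)
        conn.request("GET", "/status")
        response = conn.getresponse()
        result = response.status, json.loads(response.read())
        conn.close()
        return result
    finally:
        server.shutdown()
        server.server_close()


print(call_status_endpoint())  # (200, {'status': 'ok'})
```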

APIs can also use different types of authentication mechanisms to ensure that only authorized users can access the API. This can include using API keys, OAuth tokens, or other forms of authentication.
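A minimal sketch of an API key check might look like the following. The key store, client name, and key value are placeholders invented for this example; a real service would typically store hashed keys outside the code.

```python
import hmac

# Hypothetical key store for illustration only.
API_KEYS = {"demo-client": "s3cr3t-demo-key"}


def is_authorized(client_id, presented_key):
    """Check a presented API key against the stored one in constant time."""
    expected = API_KEYS.get(client_id)
    if expected is None:
        return False
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected.encode("utf-8"), presented_key.encode("utf-8"))


print(is_authorized("demo-client", "s3cr3t-demo-key"))  # True
print(is_authorized("demo-client", "wrong-key"))        # False
```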

Overall, APIs are an essential part of modern software development and enable developers to build more powerful and interconnected applications.

How APIs are Used in Web Development and Software Engineering

APIs are used in a variety of ways in web development and software engineering.

APIs in Web Development

Image by Freepik

APIs can be used to integrate different systems and services, expose functionality to third-party developers, or create more complex and dynamic user experiences.

One common use of APIs in web development is to integrate third-party services into a web application. For example, a website might use the Google Maps API to display maps and location-based information.

APIs can also be used to integrate with social media platforms, such as the Twitter or Facebook APIs, to allow users to share content or log in to an application using their social media accounts.

APIs can also be used to create more dynamic user experiences. For example, a website might use the YouTube API to embed videos directly on a page or use the Spotify API to play music within an application.

APIs in Software Engineering

In software engineering, APIs are used to create more modular and reusable code.

By exposing functionality through an API, developers can build components that can be used across different applications or services. This can help reduce development time and improve code quality, as well as make it easier to maintain and update software systems.

Overall, APIs are a powerful tool in web development and software engineering, enabling developers to build more complex and integrated applications.

The Different Types of APIs: RESTful APIs and SOAP APIs

There are many different types of APIs, but two of the most common are RESTful APIs and SOAP APIs.

RESTful APIs

REST, or Representational State Transfer, is an architectural style for building web services.

RESTful APIs use HTTP methods, such as GET, POST, PUT, and DELETE, to access and manipulate resources. Each resource is identified by a unique URL, and data is typically returned in JSON or XML format.
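The mapping from HTTP methods to actions on a resource can be sketched without any web framework. In the example below, the `accounts` resource, the in-memory store, and the `handle` function are all hypothetical; the status codes follow common REST conventions rather than any particular API.

```python
import itertools

accounts = {}                  # hypothetical in-memory resource store
_next_id = itertools.count(1)  # source of new resource ids


def handle(method, path, body=None):
    """Map an HTTP method and URL path to an action; return (status, body)."""
    parts = [p for p in path.split("/") if p]
    if not parts or parts[0] != "accounts" or len(parts) > 2:
        return 404, {"error": "unknown resource"}

    if len(parts) == 1:  # the collection: /accounts
        if method == "POST":
            account_id = str(next(_next_id))
            accounts[account_id] = dict(body)
            return 201, {"id": account_id}
        if method == "GET":
            return 200, sorted(accounts)
        return 405, {"error": "method not allowed"}

    account_id = parts[1]  # a single resource: /accounts/<id>
    if account_id not in accounts:
        return 404, {"error": "not found"}
    if method == "GET":
        return 200, accounts[account_id]
    if method == "PUT":
        accounts[account_id] = dict(body)
        return 200, accounts[account_id]
    if method == "DELETE":
        del accounts[account_id]
        return 204, None
    return 405, {"error": "method not allowed"}


status, created = handle("POST", "/accounts", {"owner": "Amina"})
url = f"/accounts/{created['id']}"
print(status, url)                                   # 201 /accounts/1
print(handle("GET", url))                            # (200, {'owner': 'Amina'})
print(handle("PUT", url, {"owner": "Amina K."})[0])  # 200
print(handle("DELETE", url)[0])                      # 204
print(handle("GET", url)[0])                         # 404
```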

SOAP APIs

SOAP, or Simple Object Access Protocol, is a protocol for exchanging structured information between web services. SOAP APIs use XML to encode messages and rely on a set of related standards, such as the Web Services Description Language (WSDL), which describes the operations a service offers and the format of its messages.

While both RESTful and SOAP APIs can be used to build web services, RESTful APIs are generally considered to be more flexible and easier to use. RESTful APIs also tend to be more lightweight and scalable, making them a popular choice for building modern web applications.

Best Practices for Designing and Developing APIs for Scalability and Performance

Image by Freepik

Designing and developing APIs requires careful consideration of scalability and performance.

Here are some best practices to follow:

- Keep it Simple: Design your API to be simple and intuitive. Use standard HTTP methods and response codes and limit the number of endpoints.

- Consider Caching: Use caching to improve performance by storing frequently accessed data in memory.

- Plan for Scalability: Use horizontal scaling techniques to ensure your API can handle increased traffic. This can include using load balancers, caching, and database sharding.

- Optimize Database Queries: Optimize your database queries to reduce load times and improve performance.

- Use Asynchronous Processing: Use asynchronous processing to improve performance by offloading processing tasks to a background thread or server.

- Document Your API: Make sure to document your API thoroughly, including endpoints, parameters, response codes, and error messages.

- Version Your API: Version your API to allow for changes and updates while maintaining backward compatibility for existing clients.

By following these best practices, you can ensure that your API is scalable and performs well, even as your user base grows.
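As one small example of the caching advice above, the sketch below keeps the results of an expensive lookup in memory using `functools.lru_cache` from the Python standard library. The `get_profile` function and its return value are invented for illustration, and `lru_cache` never expires entries on its own, so data that changes often would need an expiry or invalidation strategy on top.

```python
from functools import lru_cache

calls = {"count": 0}  # counts how often the slow path actually runs


@lru_cache(maxsize=128)
def get_profile(user_id):
    """Hypothetical expensive lookup, standing in for a slow database query."""
    calls["count"] += 1
    return f"profile-{user_id}"


get_profile(42)
get_profile(42)        # served from the in-memory cache
print(calls["count"])  # 1
print(get_profile.cache_info().hits)  # 1
```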

The Benefits of Using APIs

Using APIs offers many benefits to developers and users, including increased productivity and improved user experiences:

- Faster Development: By leveraging existing APIs, developers can save time and effort by not having to build everything from scratch.

- Integration with Existing Systems: APIs allow developers to integrate new applications and services with existing systems, making it easier to build more complex and integrated systems.

- Improved User Experiences: APIs can be used to create more dynamic and personalized user experiences, such as embedding videos or displaying location-based information.

- Access to Data: APIs can provide developers with access to data from other services and platforms, such as social media data or weather data.

- Increased Efficiency: APIs can automate tasks and streamline workflows, increasing efficiency and reducing manual labor.

Overall, using APIs can help developers build more powerful and integrated applications, while also improving the user experience.

Examples of Popular APIs: Google Maps API and the Twitter API

There are many popular APIs available for developers to use, including the Google Maps API and the Twitter API.

The Google Maps API allows developers to add maps and location-based information to their applications. This can include displaying maps, calculating routes, and searching for nearby places.

The Twitter API allows developers to access and interact with Twitter data, including tweets, hashtags, and user profiles. This can be used to create custom Twitter applications, or to integrate Twitter data into existing applications.

Other popular APIs include the Facebook API, the YouTube API, and the Amazon Web Services API.

How to Integrate APIs into Your Web Application or Software Project

Image by Freepik

Integrating APIs into your web application or software project can be done in several ways:

- Using SDKs or Libraries: Many APIs provide SDKs or libraries for different programming languages, making it easier to integrate them into your project.

- RESTful APIs: RESTful APIs can be accessed using standard HTTP requests, making it easy to integrate them into web applications.

- Webhooks: Webhooks can be used to receive real-time updates from APIs, allowing you to respond to events as they occur.

- OAuth: OAuth can be used to authorize users and applications and grant them scoped access to APIs, allowing you to control who can access your API.

Which of these methods you use depends on what the API provider offers and on the needs of your web application or software project.

The Importance of API Documentation and How to Create Effective Documentation for Your API

API documentation is critical for helping developers understand how to use your API. Here are some tips for creating effective API documentation:

- Use Clear and Concise Language: Use clear and concise language to describe each endpoint, parameter, and response code. Make sure that your documentation is easy to understand and follow.

- Provide Examples: Provide examples of how to use each endpoint, including sample requests and responses. This can help developers understand how to use your API more effectively.

- Keep It Up to Date: Make sure to keep your documentation up to date as you make changes to your API. This can help prevent confusion and errors when using your API.

- Use a Standard Format: Use a standard format for your documentation, such as OpenAPI (formerly known as Swagger). This can make it easier for developers to integrate your API into their projects.

By following these tips, you can create documentation that helps developers use your API effectively.

Security Considerations When Using and Developing APIs, Including Authentication and Access Control

Image by Freepik

Security is a critical consideration when using and developing APIs. Here are some security considerations to keep in mind:

- Use HTTPS: Use HTTPS to encrypt data sent between the client and server. This can help prevent data breaches and man-in-the-middle attacks.

- Use Authentication: Use authentication to verify the identity of the user accessing your API. This can help prevent unauthorized access and protect sensitive data.

- Use Access Control: Use access control to restrict access to certain endpoints and resources. This can help prevent unauthorized access and protect sensitive data.

- Use Rate Limiting: Use rate limiting to limit the number of requests that can be made to your API within a given time window. This can help prevent denial-of-service attacks and improve performance.

By addressing these security considerations, you protect your APIs and the data they access.
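Rate limiting can be implemented in several ways. One common technique is the token bucket, sketched below as an illustration: each request spends a token, and tokens refill at a fixed rate. The `TokenBucket` class and its parameters are assumptions for this example, and the fake clock is only there to make the output predictable.

```python
import time


class TokenBucket:
    """Allow bursts of up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity, rate, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        """Return True and spend one token if the request may proceed."""
        now = self.clock()
        elapsed = now - self.updated
        # Refill in proportion to the time passed, never above capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


fake_time = [0.0]  # a controllable clock so the example is deterministic
bucket = TokenBucket(capacity=2, rate=1, clock=lambda: fake_time[0])
print([bucket.allow() for _ in range(3)])  # [True, True, False]
fake_time[0] += 1.0                        # one second later, one token is back
print(bucket.allow())                      # True
```

In a real API, you would typically keep one bucket per client or API key and respond with HTTP status 429 when `allow()` returns False.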

You can take it a step further and secure your source code. This is the motivation behind DevSecOps: you embed security in your DevOps processes (CI/CD and the SDLC).

Tips for Troubleshooting Common Issues When Working with APIs

Image by Freepik

Working with APIs can sometimes result in issues or errors. Here are some tips for troubleshooting common issues:

- Check the API Documentation: Check the API documentation to make sure that you are using the correct endpoints, parameters, and response codes.

- Check for Errors: Check for errors in your code or requests, such as incorrect syntax or missing parameters.

- Check the API Status: Check the API status to see if there are any known issues or downtime.

- Test Your Code: Test your code in a controlled environment to see if the issue is specific to your code or a more general issue with the API.

Troubleshooting and resolving common issues when working with APIs is an essential part of API management.

How to Use API Analytics to Measure Usage and Performance and Make Data-Driven Decisions

Image by Freepik

API analytics can help you measure usage and performance and make data-driven decisions. Here are some tips for using API analytics:

- Track Usage: Track usage metrics, such as the number of requests made, the number of active users, and the types of requests being made.

- Monitor Performance: Monitor performance metrics, such as response times, error rates, and latency.

- Analyze User Behavior: Analyze user behavior to understand how users are using your API and identify any patterns or trends.

- Make Data-Driven Decisions: Use the data you collect to make data-driven decisions about how to improve your API and meet the needs of your users.

By using API analytics, you can gain insights into how your API is being used and make data-driven decisions to improve performance and meet the needs of your users.
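As a simple illustration of usage and performance metrics, the sketch below summarizes a small request log. The log entries, endpoint names, and the `summarize` function are made up for this example; in practice, the data would come from your API gateway or server logs.

```python
import math
from collections import Counter

# Hypothetical request log entries: (endpoint, HTTP status, latency in ms).
request_log = [
    ("/accounts", 200, 120),
    ("/accounts", 200, 95),
    ("/transfers", 500, 480),
    ("/accounts", 404, 60),
    ("/transfers", 201, 210),
]


def summarize(log):
    """Compute request counts, server error rate, and p95 latency for a log."""
    if not log:
        raise ValueError("log is empty")
    per_endpoint = Counter(endpoint for endpoint, _, _ in log)
    server_errors = sum(1 for _, status, _ in log if status >= 500)
    latencies = sorted(latency for _, _, latency in log)
    # Nearest-rank percentile: the smallest value covering 95% of requests.
    p95 = latencies[math.ceil(0.95 * len(latencies)) - 1]
    return {
        "total_requests": len(log),
        "requests_per_endpoint": dict(per_endpoint),
        "error_rate": server_errors / len(log),
        "p95_latency_ms": p95,
    }


summary = summarize(request_log)
print(summary["total_requests"])         # 5
print(summary["requests_per_endpoint"])  # {'/accounts': 3, '/transfers': 2}
print(summary["error_rate"])             # 0.2
print(summary["p95_latency_ms"])         # 480
```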

The Future of APIs and Emerging Trends: Micro-services and Serverless Computing

The future of APIs is exciting, and it is constantly evolving as new technologies emerge.

One of the most significant trends in API development is the shift towards micro-services and serverless computing.

What are Micro-services?

Micro-services are an architectural approach to building software applications that involves breaking down a monolithic application into smaller, independent services that can communicate with each other via APIs.

This approach allows developers to build and maintain software applications more easily, as each service can be developed, tested, and deployed independently.

Micro-services also offer better scalability and flexibility, as individual services can be scaled up or down depending on the workload.

What is Serverless Computing?

Serverless computing, on the other hand, is a model where the cloud provider takes care of the infrastructure required to run the code, and developers only need to worry about writing the code.

Serverless computing is becoming increasingly popular because it offers better scalability, lower costs, and faster time to market.

APIs are a crucial part of serverless computing, as they allow developers to connect different services and functions.

Artificial Intelligence and Machine Learning

Image by Freepik

Another trend in API development is the increased use of artificial intelligence and machine learning. APIs that leverage AI and machine learning can help automate tasks, make predictions, and provide personalized recommendations.

For example, an e-commerce website could use an API to analyze customer data and provide personalized product recommendations based on their browsing and purchase history.

API Design and Documentation

In addition to these trends, there is also a growing focus on API design and documentation.

Developers are realizing that well-designed APIs that are easy to use and understand can greatly improve productivity and reduce development time.

Effective documentation is essential for developers who need to integrate an API into their project, as it provides detailed information on the API’s functionality, usage, and potential errors.

How We Can Help

As the world becomes more interconnected and digital, APIs will continue to play an essential role in software development.

The future of APIs is exciting, and developers who keep up with the latest trends and technologies will be well-positioned to create innovative and impactful solutions.

Finsense Africa is a seasoned technology company, conversant with the latest technologies. Aside from building customized applications, we build a roadmap and consult on industry best practices for API governance, API monetization and API security.

Contact us today and let’s have a conversation.


Source: Image by Gerd Altmann from Pixabay

Cloud computing is an essential aspect of business operations in the modern world, and the banking industry is no exception. It offers banks a flexible and cost-effective approach to delivering IT services, enabling them to focus on their core business activities.

Banks use the cloud to reduce capital and operational expenditure, improve efficiency, and deliver better services to their customers.

In Africa, cloud computing gained popularity among banks due to its ability to overcome the region’s infrastructure limitations.

African banks face technology infrastructure challenges, such as power outages, limited connectivity, and outdated technology. These challenges have made it difficult for banks to offer quality services to their customers. As a result, cloud computing became an attractive solution.

Additionally, cloud computing saves the money associated with purchasing and maintaining on-premises hardware and software.

What to Move to the (Public) Cloud

Source: Image by Gerd Altmann from Pixabay

The first rule of cloud migration is to only migrate what makes sense.

First, identify what services or applications to move to the cloud. Banks in Africa identified non-critical and non-sensitive applications, such as email, document sharing, and customer relationship management (CRM), as good starting points.

This cautious approach allows banks to enjoy the benefits of cloud computing while minimizing the risks associated with migrating sensitive applications. Moving non-critical applications to the cloud can provide immediate benefits.

These applications shouldn’t hold customer data, and moving them shouldn’t disrupt the bank’s operations. Once the bank has gained experience with these low-risk applications, it has the confidence to gradually move more critical applications to the cloud.

For example, First Bank of Nigeria initially moved its non-core applications to the cloud before migrating its core banking applications. However, refer to your local regulators; they’re the source of policy on data cloud migration.

Services and Features That Typically Get Migrated

- DATA: Static, SQL, NoSQL, File-stores

- NETWORK: Topology, VNETS, Subnets, LB4/7, Firewalls, VPNs, Routers, Connectivity, etc.

- COMPUTE: Applications and servers.

- DEVOPS: Build, Test, and Deployment processes.

- SECURITY: WAFs, Security groups, isolation, peering, secrets, keys, certificates, monitoring, etc.

- BUDGET: Controls and Monitoring

- OBSERVABILITY and SRE: Monitoring, Logging, Tracing, Alerts, Incident Management, Patching, etc.

- IAM: Users, Roles, Groups, Privileges, AD/LDAP, etc.

- CONTROL: Management and Oversight.

Migration Use Cases: When Do Businesses Migrate?

Migration use cases are specific scenarios or situations where businesses may need to migrate their IT infrastructure and services to the cloud.

These scenarios can vary depending on the type of business and the current state of their IT infrastructure.

Various factors can drive cloud migration and technical scenarios, including:

- Data

- Infrastructure

- Applications

Data Migration

- Static Data: BLOBs (binary large objects) migrate using online transfer methods such as upload or rsync (remote sync), or offline methods such as transfer appliances or archival media, depending on their size.

- File Data: Files and directory data migrate using online transfer methods such as SMB (Server Message Block), upload, or rsync, or offline methods such as transfer appliances or archival media, depending on their size.

- (No)SQL Data: Database data should be exported, imported, and kept in sync through ETL methods, migration utilities, database file transfer, or virtualization lift and shift.
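
The choice between online and offline transfer "depending on size" comes down to simple arithmetic: how long would the data take to cross the available link? The sketch below is illustrative only. The function names, the 70% link utilization, and the seven-day cut-off are assumptions made for the example, not figures from any cloud provider.

```python
from dataclasses import dataclass


@dataclass
class TransferPlan:
    method: str            # "online" or "offline"
    estimated_days: float  # how long an online transfer would take


def estimate_online_days(size_tb: float, bandwidth_mbps: float,
                         utilization: float = 0.7) -> float:
    """Days needed to send size_tb terabytes over a bandwidth_mbps link.

    utilization is the assumed share of the link the migration may use.
    """
    if bandwidth_mbps <= 0 or not 0 < utilization <= 1:
        raise ValueError("bandwidth must be positive and utilization in (0, 1]")
    total_bits = size_tb * 1e12 * 8                     # decimal terabytes -> bits
    effective_bps = bandwidth_mbps * 1e6 * utilization  # usable bits per second
    return total_bits / effective_bps / 86_400          # seconds -> days


def choose_transfer(size_tb: float, bandwidth_mbps: float,
                    max_online_days: float = 7.0) -> TransferPlan:
    """Pick online transfer if it fits the assumed time budget, else offline."""
    days = estimate_online_days(size_tb, bandwidth_mbps)
    method = "online" if days <= max_online_days else "offline"
    return TransferPlan(method, round(days, 1))


if __name__ == "__main__":
    print(choose_transfer(size_tb=1, bandwidth_mbps=100))
    print(choose_transfer(size_tb=100, bandwidth_mbps=100))
```

Under these assumptions, a small data set fits comfortably over the network, while a data set a hundred times larger on the same link would take months and points towards a transfer appliance or archival media.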

Infrastructure and Application Migration

Infrastructure and application migration is usually the most manually intensive, requiring physical infrastructure and topology to be mapped to cloud provider-specific IaaS.

A typical approach might be compute service migration, which migrates application servers, applications, and server clusters to the cloud.

It is not just about migrating infrastructure but also about refactoring service architectures for the cloud.

Common approaches are:

- Rehost: This is a lift and shift approach used to mirror existing servers “as-is” to the cloud. You virtualize the on-premises host and upload images to the cloud. Then, you create instance groups from those cloud image(s). In this case, you can use on-prem migration utilities from some cloud providers to guide the process. This is the easiest migration to do but does not take full advantage of cloud architecture.

- Re-platform: This is the migration of services to similar cloud-native technologies. For instance, application services like web servers, web APIs, and REST applications can be dockerized and hosted on container orchestrators like Kubernetes. Then, you deploy them directly onto cloud-managed application sandboxes. However, the application binary is not refactored; it is deployed “as-is” to a cloud-managed service.

- Refactor: This approach redesigns the application architecture to fit the best available cloud services. It is dependent on architecture but would most likely include things like:

- Decomposing application logic into appropriate microservices or macro services

- Dockerizing and deploying to container services like Kubernetes

- Using native messaging and event services to provide inter-service communication

Containerization and Kubernetes

Docker and Kubernetes are two critical technologies everyone needs to know to benefit from migrating applications to the cloud.

Containerization

Docker is a tool that lets you create small and portable packages of all the software that your application needs to run. These packages are called “images.”

Once you create an image, you can run it on any computer that supports Docker. This makes it easy to move your application from one computer to another.

Docker images are much smaller than traditional virtual machine images, and they are easy to scale up or down. This means you can run your application on many different computers at once.

Docker is an important tool for modern cloud-based applications, but it can be used on any computer.

Kubernetes

One of the most popular container orchestrators is called Kubernetes, and it’s used by a lot of companies to help them run their applications on the cloud.

This type of tool is important when running your applications on a cloud platform. This is because it can help you take advantage of things like scalability (being able to easily add more resources when you need them), managed services (where the cloud provider takes care of some of the work for you), and cost savings.

Source: Cloud Migration Strategy by Alexander Wagner

Planning

Source: Image by Gerd Altmann from Pixabay

Cloud migration involves moving data, infrastructure, and applications to managed cloud services, such as infrastructure as a service (IaaS) or platform as a service (PaaS).

To ensure a successful cloud migration strategy, businesses must consider technical and business objectives and prioritize incremental migration based on business priorities.

They can use “rehost,” “re-platform,” or hybrid cloud strategies to minimize the work involved in the migration process.

Refactoring migrated services to optimize their use of the cloud is not always necessary, but it can be done gradually in small bits to gain benefits.

Becoming cloud-native is crucial for business success, as cloud-native services are designed to take advantage of the features and capabilities of the cloud, such as scalability, high availability, and elasticity.

Strategies for cloud migration include the 6-Rs approach:

- Rehost (Lift and shift): virtualize and move services “as is” to the Cloud. This is usually used for proprietary application services like web or on-premises applications.

- Re-platform: move on-prem services “as is” to the Cloud using cloud-native alternatives—e.g., cloud-managed databases. It can also include moving to PaaS services.

- Repurchase: move services (where available) to a SaaS offering.

- Refactor: partially or fully redesign the service architecture to make the best use of cloud-native services, e.g., microservices.

- Retain: keep some services—usually legacy or highly custom backends—where they are.

- Retire: decommission existing services that are no longer needed, replacing them with cloud-native services as much as possible.

It is essential to monitor and manage the cloud environment throughout the entire project, which typically includes several key stages, such as:

- Discovery: defines the business and technical case/scope, plus assets to migrate (scope of migration, business due diligence, technical due diligence, and asset discovery).

- Assessment: plans the migration and examines potential methods of execution (business assessment, technical assessment and PoC(s), migration backlog and MVP, migration planning and approval).

- Migration: runs the planned migration steps, both technical and organizational (technical migration, organizational migration of processes and structures, stabilization, and preparing the handover to SRE/Ops).

- State Running: runs team maintenance going forward (SRE/DevOps team(s) manage).

Business Impacts

Like any organizational change, potential impacts need to be considered. The impact depends on the adopted approach and the migrated or transformed applications’ architecture.

Positive

- Lower Costs: Customers only pay for what they use (metered service).

- Managed Services: Patching, upgrades, availability, etc., of services, are managed for customers.

- Elasticity: Services can scale automatically based on demand.

- SRE (Site reliability engineering): Supporting technologies like monitoring, tracing, DRaaS, etc., are provided for customers and can be more closely integrated out of the box.

- Control: Customers can choose the level of control that they want using IaaS and PaaS.

- Empowerment: Teams can potentially own their services all the way into production.

Negative

- Reskilling: Organizational reskilling is required for IT functions.

- Control: There is some loss of control over the environment.

- Processes: Business practices and structures may have to change or adapt to better support the Cloud (DevOps and Ops/SRE).

- Refactoring: Applications and services may need to be refactored to better use “modern” architectural patterns.

- SRE: Existing solutions for monitoring and recovery may need to be replaced as well, which increases cost.

- Downtime: Downtime for the migration might be an issue.

What to Leave On-Prem (Private Cloud)

The second rule of cloud migration is that migration can be done incrementally.

Although cloud computing offers numerous benefits to banks, some processes are better left on-prem. Processes that require high levels of security or regulatory compliance, such as payment processing or Know Your Customer (KYC), should remain on-prem.

This ensures that sensitive information is not compromised while providing banks with the benefits of cloud computing. After all, local African cybersecurity policy and enforcement are still underdeveloped.

The third rule of cloud migration is that hybrid cloud approaches allow the “best of both worlds.”

However, banks can still leverage the cloud’s benefits by implementing hybrid cloud solutions, where sensitive applications are kept on-prem while non-sensitive applications are moved to the cloud.
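
The hybrid split described above can be expressed as a simple placement rule: anything sensitive or regulated stays on-prem, and everything else is a candidate for the public cloud. The sketch below is a minimal illustration. The `Workload` fields and the example workloads are hypothetical, and a real bank would take its classification from its regulator and internal risk policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    handles_sensitive_data: bool  # e.g. KYC records or payment details
    regulated: bool               # subject to residency or compliance rules


def placement(workload: Workload) -> str:
    """Keep sensitive or regulated workloads on-prem; move the rest to the cloud."""
    if workload.handles_sensitive_data or workload.regulated:
        return "on-prem"
    return "public-cloud"


def plan(workloads: list[Workload]) -> dict[str, list[str]]:
    """Group workload names by where the placement rule puts them."""
    result: dict[str, list[str]] = {"on-prem": [], "public-cloud": []}
    for workload in workloads:
        result[placement(workload)].append(workload.name)
    return result


if __name__ == "__main__":
    portfolio = [
        Workload("email", handles_sensitive_data=False, regulated=False),
        Workload("payment-processing", handles_sensitive_data=True, regulated=True),
        Workload("document-sharing", handles_sensitive_data=False, regulated=False),
    ]
    print(plan(portfolio))
```

Keeping the rule in one small function makes it easy to review with compliance teams and to tighten as regulations change.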

Navigating Compliance

Banks in Africa adhere to strict regulatory requirements. Therefore, compliance is a critical part of cloud computing. Banks must ensure their cloud providers meet the required regulatory standards and comply with local and international laws.

For example, the Nigerian Data Protection Regulation (NDPR) requires banks to store customer data within the country’s borders. Banks must, therefore, partner with cloud providers within Nigeria to comply with the NDPR.

Another example is South Africa’s Protection of Personal Information Act 4 of 2013, which requires cloud providers to implement appropriate security measures to protect personal data.

Other Commonwealth countries like Kenya borrowed their cloud policies from the UK General Data Protection Regulation (GDPR). Banks’ customers in those countries have more control over their data.

Monitoring Cloud Performance

Source: Image by Gerd Altmann from Pixabay

As African banks continue to adopt cloud technology, it’s crucial that they establish effective monitoring practices. These will ensure the security and efficiency of their private and hybrid cloud environments.

Auditing access controls, user activities, and system configurations for their private clouds can prevent unauthorized access or data breaches.

Also, they should manage data transfers between on-premises and cloud environments. This practice maintains data integrity and complies with regulatory requirements. Monitoring performance metrics such as response times and availability is also essential for hybrid clouds.

For instance, a bank that uses a hybrid cloud might need to monitor data transfers between its on-premises financial system (FS) and its cloud-based customer relationship management system (CRM) to ensure the privacy and security of sensitive financial information.

Automated tools and trained personnel can help with monitoring and promptly responding to incidents, thereby reducing risks and maximizing the benefits of cloud technologies.
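
To make the two performance metrics mentioned above concrete, the sketch below computes availability and a 95th-percentile response time from raw measurements and reports any breach of a target. The function names and the targets (500 ms and 99.9%) are assumptions chosen for illustration. In practice these figures would come from the bank's own service-level objectives and monitoring tooling.

```python
import math


def availability_pct(successful: int, total: int) -> float:
    """Share of requests that succeeded, as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return successful / total * 100


def percentile(values: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least
    pct percent of all samples at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def slo_breaches(latencies_ms: list[float], successful: int, total: int,
                 max_p95_ms: float = 500.0,
                 min_availability_pct: float = 99.9) -> list[str]:
    """Return a human-readable message for every target that is missed."""
    breaches = []
    p95 = percentile(latencies_ms, 95)
    if p95 > max_p95_ms:
        breaches.append(f"p95 latency {p95:.0f} ms exceeds {max_p95_ms:.0f} ms")
    availability = availability_pct(successful, total)
    if availability < min_availability_pct:
        breaches.append(
            f"availability {availability:.2f}% is below {min_availability_pct}%"
        )
    return breaches


if __name__ == "__main__":
    samples = [120.0] * 19 + [900.0]
    print(slo_breaches(samples, successful=990, total=1000))
```

A percentile is used rather than an average because a handful of very slow requests can hide behind a healthy-looking mean.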

Security

Source: Image by ambercoin from Pixabay

Cybercrimes affect all countries, but weak networks and security make countries in Africa particularly vulnerable.

Throughout the entire project, it is essential to continuously monitor and manage the cloud environment to ensure that it remains secure, cost-effective, and aligned with the business objectives.

Monitoring involves ongoing maintenance and support, as well as periodic optimization and updates to the infrastructure and applications. The typical methodology for a cloud migration involves several key stages, including:

- Analysis of technical and business needs

- Planning and evaluation of resources

- Design and construction

- Testing

- Migration

- Monitoring

Africa has an estimated 500 million Internet users, 38% of the total population, leaving huge potential for growth. Additionally, Africa has the fastest-growing telephone and Internet networks in the world and makes the widest use of mobile banking services.

This digital demand, coupled with a lack of cybersecurity policies and standards, exposes online services to major risks.

As African countries move to incorporate digital infrastructure into all aspects of society, including government, banking, business and critical infrastructure, it is crucial to put a robust cybersecurity framework into place.

“Not only do criminals exploit vulnerabilities in cyber security across the region, but they also take advantage of variations in law enforcement capabilities across physical borders,” said Craig Jones, INTERPOL’s Director of Cybercrime.

Importance of Localized Support

Source: Image by John Hain from Pixabay

Banks in Africa require local support from their cloud providers to address the region’s unique challenges.

Local support ensures that the cloud provider understands the local regulatory requirements, infrastructure challenges, and cultural differences.

Cloud providers must also offer local language support and have a local presence to provide quick response times. For example, cloud providers such as Oracle and Microsoft have established data centers in South Africa.

How Can We Help?

Banks across Africa are adopting various digital transformation trends to enhance scalability and streamline core operational areas.

Many businesses are unfamiliar with cloud migration projects and related services, which require a complete transition and a well-crafted plan.

However, the latest cloud migration tools can fully streamline the migration process. This is where Finsense Africa comes in to create a roadmap and consult on industry best practices before projects start.

Access to IT resources and services without the burden of owning and maintaining the underlying infrastructure is one of the many benefits of migrating to the cloud. However, the existing infrastructure should have the capacity to support migration and a seamless hybrid cloud.


]]>

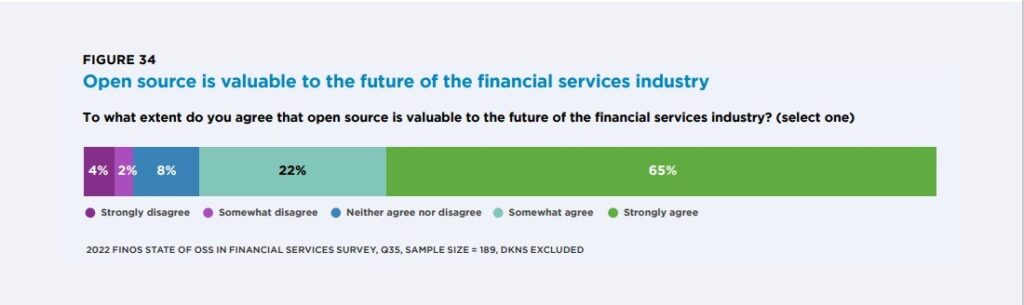

Top organizations that use open source include Google, Facebook, Microsoft, Amazon, IBM, Twitter, Red Hat, Uber, Airbnb, and Netflix.

Why are banks and hedge funds suddenly into open source? Past practices have indicated that banks are very competitive and cautious with their proprietary data.

Since they handle confidential data, they’ve been expected to keep secrets. For example, in 2009 Goldman Sachs had an employee jailed for allegedly stealing their proprietary software.

However, 8 years later in 2017 Goldman Sachs launched three of its latest open-source projects – Jrpip, Obevo and Tablasco – on GitHub. They also have an in-house language, Legend, that is now open source.

In the creation and use of open source, tech-based companies outperform financial businesses. For instance, Google has 70 open-source projects. The largest of them all is Android, which runs on about 75% of all smartphones.

Why Organizations Choose Open-Source

As a result, banks in 2023 will increasingly adopt open-source technology, as they are under pressure to innovate and remain competitive. This shift is driven by a desire to gain access to new and emerging technologies, such as machine learning and blockchain, to improve customer experience and reduce operating costs.

Banks are opting for open-source technology because

- Cost Savings: Open source saves costs by eliminating the need to pay for expensive proprietary software licenses and reducing development costs by leveraging existing open-source solutions.

- Customization: Open-source software can be customized to fit an organization’s unique needs, as opposed to proprietary software, which may be more rigid.

- Security: Open-source software can be more secure because vulnerabilities can be quickly identified and addressed by the community of developers.

- Innovation: Open source fosters innovation by allowing organizations to collaborate and share ideas with others in the community.

- Reliability: Open source has proven to be reliable and stable in mission-critical applications. For example, the Linux operating system is widely used in mission-critical applications such as space missions and stock exchanges.

- Community Support: Open-source communities can guide and assist organizations with their projects, including bug fixes and development advice. The Apache web server project is an example of a project that benefits from a large and active developer community for ongoing support.

- Future Outlook: More organizations are likely to adopt open-source solutions, like Red Hat Enterprise Linux, which provides a secure and stable operating system that can be tailored to meet specific business needs.

In addition, open-source software is flexible enough to be customized to specific needs. This allows banks to develop innovative applications that leverage the latest technologies, such as AI and machine learning, to understand customer behavior and anticipate their financial needs.

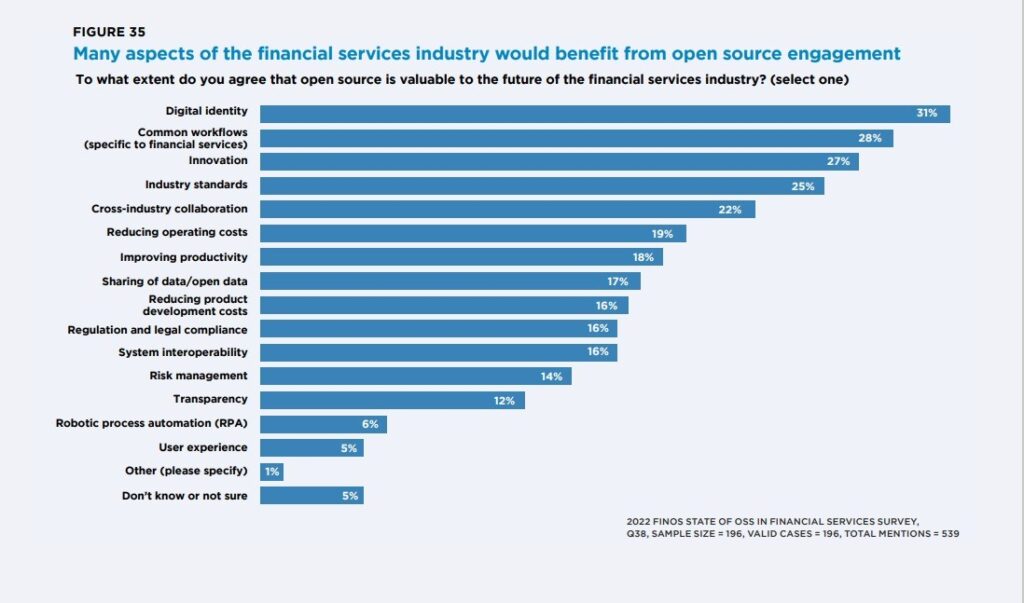

Real-Life examples of Open-Source Technology Adoption

One example of open-source technology being adopted by banks is the operating system Linux. Banks such as ING, UBS and JPMorgan Chase have implemented Linux powered systems to better manage their IT infrastructure. They use it to:

- Host their IT infrastructure and provide a secure computing environment.

- Develop custom applications, such as mobile banking and digital wallets.

Other open-source projects that are popular in the banking sector include:

- Apache Kafka, an event streaming platform

- Hadoop, a big data analytics platform

Historically, banks have been hesitant to adopt open-source software (where software source code is shared and made freely available). With traditional vendors like IBM, TIBCO, and Oracle strongly positioned in this industry, the move to open source has been slow.

In recent years, forced by a rapidly changing business environment, banks have been transforming their IT organizations considerably, adopting new technologies and methodologies like Cloud, microservices, Open APIs, DevOps, Agile and Open Source. These adoptions often reinforce each other.

The Open-Source movement has reached maturity. While 5-10 years ago it was associated with computer nerds, idealists and small start-ups, today it is mainstream. The recent acquisitions of open-source companies by large, established corporate tech vendors are the best proof of this evolution:

- Salesforce bought MuleSoft for $6.5 billion in March 2018.

- Microsoft bought GitHub for $7.5 billion in October 2018.

- IBM purchased Red Hat for $34 billion in 2019.

At the same time these incumbent tech players are adopting open-source strategies themselves. For example, Microsoft, initially one of the most guarded, has adopted an open-source strategy, since Satya Nadella became CEO in 2014. Examples of its open-source technologies include:

- Edge: The Edge browser has switched to the Google-based open-source Chromium platform

- .NET framework: the full .NET framework was open-sourced on GitHub

- Windows 10: built on open-source Progressive Web App technology

- Windows 11: analysts speculate that the NT kernel would be (gradually) replaced by the Linux kernel

- Azure platform: the most used operating system on Microsoft Azure is not Windows Server, but rather Linux

- Open-source contribution: Microsoft has become the largest contributor to open source in the world. It is more active than the second most active contributor, Google. There are 20,000 Microsoft employees on GitHub and over 2,000 open-source projects

The Different Stages of Open-Source Adoption

Open-source software has many benefits for banks, but it requires a cultural shift in the whole organization, which takes time and intensive change management.

Banks can start adopting open-source software in different ways. They can start by using open-source software where possible, either as full solutions or as components they combine to build custom applications.

As they become more familiar with open-source software, banks can start contributing back to the community by identifying bugs and implementing valuable features. By doing so, banks improve their corporate image and benefit from future testing and extensions by the community.

The final step is to open the bank’s existing proprietary software, which is the most complex and time intensive.

First, banks fear their code will be scrutinized in public, resulting in a brand risk and potentially exposing security issues. Additionally, some bank leaders may fear giving away competitive advantage.

Second, the path depends on the kind of open-source software and how complex it is. Banks should first gain experience with low-level abstraction open-source software, like:

- Databases (MySQL, MongoDB, Cassandra and Postgres)

- Middleware (WSO2, Kafka, Apache Camel, Envoy, Istio)

- Operating systems and container platforms (Linux and Kubernetes)

Gradually they can move up the stack to higher level of abstractions, like:

- Business process (jBPM)

- Task management tools

Finally, they can also use open source for the financial core processes. Options include Cyclos, Mifos X / Apache Fineract, MyBanco, Jainam Software, OpenCBS, OpenBankProject, Cobis, OpenBankIT, and Mojaloop.

Contributing to Open-Source

Ultimately the banks’ software should have at its core open-source software, except for solutions exclusively offered via SaaS.

Many banks already use open-source software and prefer it over proprietary software. More banks are contributing to open-source projects or open-sourcing their own software. Some examples of such banks include:

- Capital One, one of the largest credit card companies in the US, has been on a digital transformation journey over the past 6 years.

- Goldman Sachs recently open-sourced its proprietary data modeling program Alloy.

- J.P. Morgan Chase released code on GitHub for multiple initiatives, including its Quorum blockchain project.

- Deutsche Bank open-sourced multiple projects, like Plexus Interop (from its electronic trading platform Autobahn) and Waltz (IT estate management).

The Dilemma of Open-Sourcing In-House Software

The move of some banks to open-source proprietary software seems strange at first sight, as intelligent software has become the competitive edge of any bank. Nonetheless banks have a lot to gain in using (adopting) open source and contributing to it:

5 Benefits of using open-source software:

- Lower costs: avoid the exorbitant annual software license costs paid to software vendors.

- Reduce time-to-market: allowing developers to bolt together pre-existing modules, rather than having to create them all from scratch, considerably reduces development time.

- Easily customizable: open-source software can be customized, providing a golden mean between buying a software package from a vendor (quick time-to-market, but limited customization possibilities) and internally custom-built software.

- No vendor lock-in

- Lower learning curve for new joiners

7 Benefits of Contributing to Open-Source Software:

- Good for corporate image through giving back to the community.

- Transparency: open-source software can be more secure than proprietary software, where the code is kept secret, because anyone can inspect it.

- Easier hiring of resources, as IT resources like to work on open source, and good potential candidates can be identified by looking at public commits by external contributors to the bank’s open-source projects.

- Motivation: IT resources at banks often feel a lack of social commitment. Contributing back to open source can give them a feeling of pride and of giving back to the community.

- Cultural accelerator: open-source communities promote collaboration, almost always remote and often across different time zones and cultures. Collaborating in such an environment will make the bank IT department better and more adapted for future evolutions.

- Gain from testing and extensions built by contributors outside the bank

- Facilitates collaboration between different banks on shared concerns like KYC (Know Your Customer) and AML (Anti-Money Laundering)

5 Fears of Making In-House Software Open-Source

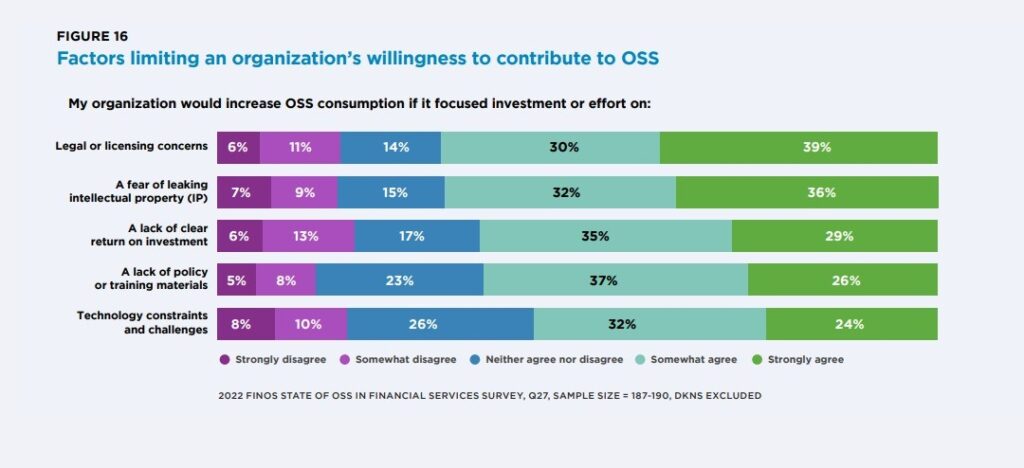

Even though open source has many advantages, there are still some banks that are hesitant to use it. These banks are especially hesitant to contribute to open source or share their own software. Here are some reasons:

- Contractual and legal: Various types of open-source software license models exist, which can make it challenging for large banks to comply with all the terms and conditions. However, tools like FOSSA, DejaCode, WhiteSource, and Code Janitor help monitor and follow up on compliance with open-source licenses.

- Support: Banks worry about a lack of support when using open-source tools. However, many open-source tools offer corporate support. If not, the bank IT teams should engage with the open-source community to resolve an issue.

- Compliance and security: Publishing source code on the internet carries the risk that criminals can find loopholes in the code. Ultimately, though, open-sourcing code leads to more secure software.

- Losing Competitive advantage: Most internal banking software is commodity software that doesn’t provide any differentiation. Open sourcing these applications can free up resources to work on real value-added services.

- Brand risk: Banks are concerned that open-sourcing bad software can harm corporate branding.
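
The license compliance concern in the first point can be pictured as a check of each dependency's declared license against a list the bank's legal team has approved. The sketch below is a deliberately simplified stand-in for what dedicated compliance tools do. The allowlist, the function name, and the package names are hypothetical, and the license identifiers follow the SPDX short-form style.

```python
# Licenses assumed to be pre-approved by the bank's legal team (illustrative only).
ALLOWED_LICENSES = frozenset({"MIT", "Apache-2.0", "BSD-3-Clause"})


def find_license_violations(
    dependencies: dict[str, str],
    allowed: frozenset[str] = ALLOWED_LICENSES,
) -> dict[str, str]:
    """Return the dependencies whose declared license is not on the allowlist.

    dependencies maps a package name to its declared license identifier.
    """
    return {
        name: license_id
        for name, license_id in sorted(dependencies.items())
        if license_id not in allowed
    }


if __name__ == "__main__":
    # Hypothetical dependency inventory for an internal application.
    inventory = {
        "event-streaming-client": "Apache-2.0",
        "reporting-lib": "GPL-3.0-only",
        "string-utils": "MIT",
    }
    for name, license_id in find_license_violations(inventory).items():
        print(f"{name}: {license_id} needs legal review")
```

Flagged packages are not necessarily forbidden; the point is to route them to a person for review before they reach production.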

How Does Open Source Affect FinTech?

If banks start using a lot of open-source software, will the new software services that FinTechs offer to banks fail?

Fortunately, fintech has already moved from an annual license model to newer partnership models. Using cloud technology and Open APIs has made it hard to justify annual licenses.

Partnership models are now used instead of software license costs. These include:

- PaaS (Platform as a Service), SaaS (Software as a Service) and BaaS (Business as a Service) models

- “Open core” models (also called dual licensing). Here, the core of the software is delivered for free and open source. However, the tooling for large corporations is license based

- Support model: pay a party for access to a support desk and providing updates on when new versions should be installed

- Service model for profits; training services, implementation services, customizations to the open-source software at request of the banks.

Making an open system of collaboration between FinTech and banks will lead to better services for everyone. Banks should understand that technology is important for their business.

They should learn from the big technology companies by hiring the best people, using existing software, and supporting quick changes with DevOps and Agile methods. Banks can use open-source strategies to achieve this goal.


]]>

The future of finance is changing rapidly. Over the past decade, advanced machine learning has taken over many tasks that humans previously performed.

From self-driving cars to smart phones, these technologies have advanced at an exponential rate. Now, new technologies are emerging that use artificial intelligence (AI) to complement existing processes and provide insights that allow people to make better decisions.

On November 30th, 2022, OpenAI launched ChatGPT. By December 4th, the open platform had over a million users, breaking the records of other popular platforms.

Facebook took up to 10 months to accumulate one million users. Twitter took up to 2 years to get to a million users. The OpenAI innovation reached its first million users in a matter of days.

The number of posts on LinkedIn and YouTube about the supposed solution to all repetitive tasks was overwhelming. Experts have mixed reactions to the possibilities of AI; some agree that its impact has been far-reaching and positive so far.

Understanding the impact of AI on finance services

Today, AI is an integral part of our lives. From self-driving cars and video games to Netflix’s recommendation engine and Facebook’s algorithm, AI is no longer just in our pocket (it’s in everything).

But what has this changed for the financial services industry? What are the biggest risks and opportunities that it can bring? And how will AI impact your role in finance moving forward?

According to the McKinsey Technology Trends Outlook 2022 research overview, applied AI received $165 billion in investment funding in 2022.

Through the machine learning Canonical stack, we are witnessing the democratization of AI. The use of artificial intelligence will aid in the management of credit card fraud risks and the prevention of losses.

The benefits of AI in the financial industry

The benefits of AI in the financial industry are manifold. The technology is expected to reduce the costs of customer service and improve efficiencies across all stages of the process. It will also enable banks to enhance their product offerings and offer tailor-made services to customers.

The introduction of AI technologies is expected to provide financial institutions with a competitive advantage, enabling them to better adapt to the rapidly changing market environment.

AI has been a major buzzword for years now and has become a popular topic of discussion as we aim to reinvent our future. As AI becomes mainstream here are some benefits the financial industry will enjoy:

● Lower costs by automating repetitive tasks that take up a lot of time.

● Improved customer experience through personalized recommendations and services.

● Improved efficiency by utilizing machine learning algorithms to make better decisions.

● Increase in revenue through improved market research and analysis.

The financial services industry is undergoing a transition as AI becomes more pervasive. It’s no longer about the myth of replacing humans with machines, but about leveraging artificial intelligence to better serve customers and improve operations.

In fact, AI can play a key role in helping banks and other financial institutions create value for their customers and deliver a better customer experience.

Here are some ways AI will improve the customer’s journey:

1. Improving customer service: Analyzing data from transactions and account activity to identify trends that can be used for predictive analytics. For example, AI could analyze historical transaction data and identify trends such as when customers purchase certain products or make payments on time. This allows banks to predict which customers might need assistance in the future.

2. Automating tasks: AI can automate many of the tasks currently done manually, freeing up employees for customer support. For example, an AI system might be able to integrate information from multiple sources, such as account statements, credit scores and social media posts, into a single report that shows the entire picture of your finances in real time.

3. Predicting client behavior: With enough data about customers’ past spending habits, an AI system could predict what they’re likely to want in the future.

4. Supporting decisions: Decision support systems (DSS), which are based on data analysis and machine learning algorithms, can assist in making financial decisions.

The impact of AI on finance is that it can automate many manual tasks, freeing up time and resources for humans to focus on higher-value activities. These include:

1. Predicting future events, such as the weather or stock prices, which could be used to make better investment decisions.

2. Creating original content, such as articles and videos, which can be shared with customers in order to drive engagement and loyalty.

3. Gaining insights into customers’ behavior and preferences, which help institutions understand how best to serve them. AI can analyze large amounts of financial data to forecast future outcomes or trends, a process known as advanced analytics or big data analytics (sometimes offered as Big Data as a Service, or BDaaS).

Predictions for the adoption of AI in 2023

Artificial intelligence (AI) leaders, consultants and vendors looked at enterprise trends and made their predictions. After a whirlwind 2022, here are some quoted highlights of their insights:

1. AI will be at the core of connected ecosystems – Vinod Bidarkoppa, CTO of Sam’s Club and SVP of Walmart

In 2023, we’re going to see more organizations start to move away from deploying siloed AI and ML applications that replicate human actions for highly specific purposes and begin building more connected ecosystems with AI at their core.

This will enable organizations to take data from throughout the enterprise to strengthen machine learning models across applications, effectively creating learning systems that continually improve outcomes.

For enterprises to be successful, they need to think about AI as a business multiplier, rather than simply an optimizer.

2. AI will create meaningful coaching experiences – Zayd Enam, CEO, Cresta

Modern AI technology is already being used to help managers, coaches and executives with real-time feedback to better interpret inflection, emotion and more, and provide recommendations on how to improve future interactions.

The ability to interpret meaningful resonance as it happens is a level of coaching no human being can provide.

3. AI will empower more efficient DevOps – Kevin Thompson, CEO, Tricentis

When it comes to DevOps, experts are confident that AI is not going to replace jobs; rather, it will empower developers and testers to work more efficiently. AI integration is augmenting people and empowering exploratory testers to find more bugs and issues upfront, streamlining the process from development to deployment.

In 2023, we’ll see already-lean teams working more efficiently and with less risk as AI continues to be implemented throughout the development cycle.

“Specifically, AI-augmentation will help inform decision-making processes for devops teams by finding patterns and pointing out outliers, allowing applications to continuously ‘self-heal’ and freeing up time for teams to focus their brain power on the tasks that developers actually want to do and that are more strategically important to the organization.”

4. AI investments will move to fully-productized applications – Amr Awadallah, CEO, Vectara

There will be less investment within Fortune 500 organizations allocated to internal ML and data science teams to build solutions from the ground up. It will be replaced with investments in fully productized applications or platform interfaces to deliver the desired data analytic and customer experience outcomes in focus.

That’s because in the next five years, nearly every application will be powered by LLM-based neural network-powered data pipelines to help classify, enrich, interpret and serve.

But productization of neural network technology is one of the hardest tasks in the computer science field right now. It is such a fast-moving space that, without dedicated focus and exposure to many different types of data and use cases, internal ML teams will find it hard to excel at leveraging these technologies.

References

ai-trends-for-2023-industry-experts-and-chatgpt-ai-make-their-predictions/

AI is Changing Financial Services Delivery

AI is transforming the way businesses operate and invest, enabling them to identify patterns, make predictions, create rules, automate processes, and communicate more efficiently.

It is at the top of the agenda for financial services, as customers are becoming more informed and expect transparent, consistent, and reliable services.

To generate value, banks and financial services organizations should be smart about choosing appropriate use cases and technologies. These use cases should outline what “measurable” success looks like both in the short and long term and should be assessed and prioritized based on the level of business impact and technological feasibility.

For example, classification problems are common in banking: AI-driven call center compliance automation uses AI to classify high-risk calls for further review.
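To make the idea of classifying high-risk calls a little more concrete, here is a minimal sketch in Python. It scores a call transcript against a short list of weighted risk phrases and flags anything above a threshold for review. The phrases, weights, threshold, and function names are all hypothetical, and a real compliance system would more likely rely on a trained model than on a hand-written list.

```python
# Hypothetical risk phrases and weights; illustrative only,
# not taken from any real compliance policy.
RISK_PHRASES = {
    "guaranteed return": 3.0,
    "no risk": 2.5,
    "off the record": 2.0,
    "act now": 1.0,
}
REVIEW_THRESHOLD = 2.5  # assumed cut-off for sending a call to human review


def risk_score(transcript: str) -> float:
    """Sum the weights of every risk phrase that appears in the transcript."""
    text = transcript.lower()
    return sum(weight for phrase, weight in RISK_PHRASES.items() if phrase in text)


def classify_call(transcript: str) -> str:
    """Label a call 'high-risk' or 'low-risk' based on its score."""
    return "high-risk" if risk_score(transcript) >= REVIEW_THRESHOLD else "low-risk"


calls = [
    "This fund has a guaranteed return, so act now.",
    "I can help you reset your online banking password.",
]
labels = [classify_call(call) for call in calls]
print(labels)
```

A simple baseline like this also gives the team a "measurable" starting point: any model introduced later should flag the right calls more reliably than the keyword list does.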

Mariette van Niekerk leads the Data Science & AI practice of Deloitte New Zealand’s Risk Advisory team, using a variety of machine learning / AI technologies to detect and manage fraud and operational risks.

She is a seasoned data scientist and project manager with a 12-year track record of delivering cross-industry operations research and artificial intelligence solutions. She recommends breaking the high-level plan for full roll-out down into smaller phases that deliver benefits early.

References

“How AI Is Shaping the Future of Financial Services.” Deloitte New Zealand, 18 May 2022

Challenges of Adopting AI in the Financial Industry

The use of AI in global banking is estimated to grow from a $41.1 billion business in 2018 to $300 billion by 2030. Traditional financial services companies have two objectives to fulfill with AI: achieving speed, flexibility, and agility; and adhering to compliance standards and regulatory requirements.
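To put that estimate in perspective, the short Python sketch below works out the compound annual growth rate implied by the two figures. It assumes steady year-on-year growth across the 12 years, which is a simplification; the function name is our own. The result comes out at roughly 18% per year.

```python
def compound_annual_growth_rate(start_value: float, end_value: float, years: int) -> float:
    """Return the constant yearly growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the estimate above, in billions of US dollars.
start_2018 = 41.1
end_2030 = 300.0
years = 2030 - 2018  # 12 years

cagr = compound_annual_growth_rate(start_2018, end_2030, years)
print(f"Implied annual growth: {cagr:.1%}")
```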

However, big challenges remain in building responsible and ethical AI systems, and traditional financial institutions struggle to deploy in-depth AI capabilities to truly harness its full potential.

These include:

● Poor data quality and weak core structures, which make it difficult for AI and ML systems to identify overlapping and conflicting entries.

● Lack of support for AI-specific scale and volume. AI systems can also show biased results when they reflect the biases of the developers who wrote them.

● Lack of standard processes and guidelines for AI in the financial domain.

● Lack of talent and budget constraints.

● The significant commitment that AI investment requires.

It is important to consider the context, use case, and type of AI model implemented when determining the appropriate approach to collaborating on or upgrading core tech systems.

The Economist’s research team found that 86% of financial services executives plan to increase AI-related investment over the next five years, with the strongest intent expressed by firms in the APAC and North American regions.

Businesses that scale with AI over time will benefit from a sustained focus on compliance, customer satisfaction, and retention.

References

AI Adoption Challenges in Traditional Financial Services Companies, 7 Mar. 2022

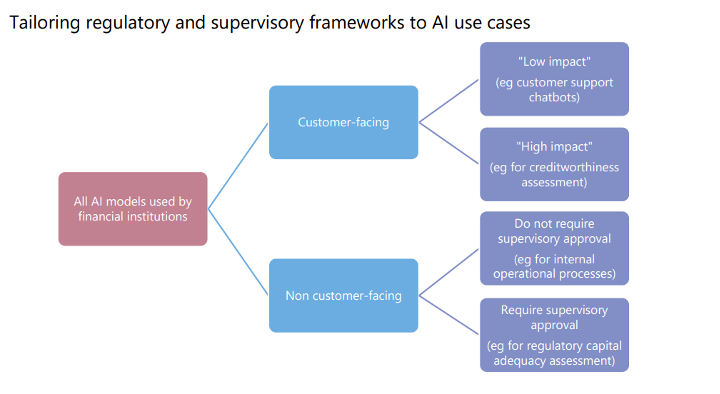

Role of Government and Regulatory Bodies in Financial Services AI

AI, including machine learning (ML), can improve the delivery of financial services as well as operational and risk management processes. Financial authorities are encouraging financial sector innovation and the use of new technologies.

As a result, sound regulatory frameworks are required to maximize benefits and minimize risks from these new technologies. There are AI governance frameworks or principles that apply across industries, and several financial authorities have begun developing similar frameworks for the financial sector.

These frameworks are based on general guiding principles such as dependability, accountability, transparency, fairness, and ethics. Financial regulators are under increasing pressure to provide more concrete, practical guidance.

Existing governance, risk management, and development and operation requirements for traditional models also apply to AI models.

Businesses can learn from the financial industry behemoth JP Morgan. This section examines how one of the largest banks, JP Morgan Chase & Co., is using artificial intelligence to tackle a slew of mundane tasks.

The multinational is unwavering in its commitment to lowering costs, increasing operational efficiency, and improving the client experience, and it has been an early adopter of disruptive technologies such as Blockchain.

They established a center of excellence within Intelligent Solutions in 2016 to investigate and implement a growing number of use cases for machine learning applications across the organization.

One target is document review, in which corporate lawyers analyse large amounts of data to sort and identify the pieces that matter for litigation. It is one of the legal profession’s pain points.

According to a McKinsey & Co. study, nearly a quarter of lawyer work output can be automated. According to a study conducted by Frank Levy at MIT and Dana Remus at the University of North Carolina School of Law, implementing machine learning could reduce lawyers’ billable hours by about 13%.

JP Morgan has also implemented a program called COiN, which uses unsupervised machine learning to automate the contract document review process. The primary technique employed is image recognition, and the algorithm can extract 150 relevant attributes from annual commercial credit agreements in seconds, as opposed to 360,000 person-hours for manual review.

COiN is proving to be more cost-effective, efficient, and error-free. The company remains committed to its technology hubs for teams specializing in big data, robotics, and cloud infrastructure in order to find new revenue streams while reducing expenses and risks.

References:

“AI in Banking: A JP Morgan Case Study and Takeaway for Businesses.”

Conclusion

In conclusion, the current state of Artificial Intelligence in banking is rapidly evolving and has the potential to greatly improve the efficiency and accuracy of financial services. AI-powered solutions can help banks with tasks ranging from fraud detection to customer service and personalization.

However, the implementation of AI in the banking sector must be done with caution and proper regulations in place to ensure the safety and privacy of customers’ data and to prevent any potential biases in decision making.

As AI continues to mature, it is expected to bring significant benefits to the banking industry and its customers, and it is essential for banking executives to stay informed and proactive in incorporating AI technology into

Source: Atul Kumal from LinkedIn

APIs can bring value to a company by helping with innovation and development, but only a few can make money directly. A good API strategy can help simplify things and save money in the long run. To build a successful API strategy, consider these steps:

- Decide on the strategy based on the business, target audience, and API usage.

- Organize APIs based on data sensitivity, importance, and usage.

- Make sure the APIs you choose are valuable.

- Set up different portals, security measures, and paths for internal and external users.

- Measure the success of your APIs using metrics.

- Have a service level agreement policy that considers your backend capacity.
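As a rough illustration of the last step, the Python sketch below shows one common way to keep API traffic within what the backend can handle: a token bucket rate limiter per consumer tier. The tier names, request limits, and backend capacity figure are hypothetical, and the token bucket is just one of several techniques that could enforce a service level agreement.

```python
import time


class TokenBucket:
    """Allow up to `rate` requests per second on average, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)  # start with a full bucket
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        """Return True if a request may proceed now, consuming one token."""
        now = self.clock()
        # Refill tokens for the time elapsed, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# Hypothetical SLA tiers (requests per second), chosen so that their sum
# stays within an assumed backend capacity.
BACKEND_CAPACITY_RPS = 100
SLA_TIERS = {"internal": 60, "partner": 30, "public": 10}
assert sum(SLA_TIERS.values()) <= BACKEND_CAPACITY_RPS

limiters = {tier: TokenBucket(rate=rps, capacity=rps) for tier, rps in SLA_TIERS.items()}

if limiters["public"].allow():
    print("request accepted")
else:
    print("request rejected: rate limit reached")
```

Keeping the tier limits in one place makes it easy to check that what you promise to consumers never adds up to more than the backend can serve.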

Having a clear API strategy can help simplify things and save money in the long term. It also has these benefits:

- Helps you understand things faster

- Gives you confidence in your investments

- Makes things more flexible

- Prevents things from getting too complicated

- Lets you communicate changes easily across the company.

Source: API expert David Roldan

An Introduction to Monetizing APIs in the Banking Industry

The African banking industry has been rapidly evolving in recent years, with a growing emphasis on digital transformation and innovative financial technology solutions.

One area that is gaining traction in the region is the monetization of APIs (Application Programming Interfaces) in the banking sector.

APIs are the building blocks that enable financial institutions to expose their systems and data to third-party developers, who can then create new financial products and services based on this data.

By monetizing their APIs, banks can generate new revenue streams, enhance their brand, and improve customer engagement.

An example of this in Africa is the Kenya Commercial Bank (KCB), which has been a leader in the use of open APIs in the region. The bank has made its APIs available to developers, allowing them to create innovative financial products and services based on KCB’s data and systems.